🍪#8 Fuzzing an ADK LLM-based Agent via a GitLab CI/CD Pipeline

Automating AI security testing to detect jailbreaks and report vulnerabilities directly in GitLab’s Vulnerability Report using FuzzyAI

I recently started learning about AI more seriously, specifically about AI security, and to be honest, I got completely hooked. It actually surprised me how captivated I became, because when I was studying at university, I remember not being very enthusiastic about AI at all. Back then, it felt just like something interesting, but not really useful for everyone’s life.

Luckily, or maybe not, everything has changed. I actually wrote how AI will become the core part of the websites we use every day, and that made me realize that learning about AI security is not only interesting, but will likely become the most important skill in the near future for security professionals.

If you come from the AppSec world like me, you will probably notice that many things feel familiar. There are more similarities with traditional applications than you might expect, even though the fundamentals are different. This actually makes getting started much easier, so I definitely encourage you to start exploring right now.

Fuzzing is not a new concept at all, but for some reason it confused me for quite a while because I kept mixing it up with brute force attacks. I was never sure where the line between them was, until I found definitions that finally made it click for me:

Brute force is a trial-and-error technique where an automated process tries many possible combinations in the hope of eventually guessing the correct one, for example to crack passwords, secrets, or encryption keys.

Fuzzing, on the other hand, is a testing technique where random, malformed, or unexpected inputs are sent to an application in order to discover bugs, crashes, or vulnerabilities.

When we bring this idea into the LLMs context, fuzzing means automatically generating large volumes of unexpected, malformed, or adversarial inputs to reveal weaknesses in the model.

Running a fuzzing test as part of a CI/CD pipeline makes a lot of sense. The goal is simple: find the problems before attackers do, and harden the model against things like prompt injection, data exfiltration, unsafe outputs, or misalignment. And that is exactly what the GitHub repository that I am introducing in this post is all about.

The goal of this project is to demonstrate how fuzzing can be applied to an ADK LLM-based agent, while fully automating the process through a GitLab CI/CD pipeline and deploying the target agent on Google Cloud using Pulumi.

In this example, I use FuzzyAI to generate adversarial prompts designed to jailbreak the agent, extract sensitive information, or bypass its safety controls. The responses are then evaluated using GPT-4o as a classifier, which determines whether the model behavior should be considered unsafe or policy-violating.

This is the pipeline job definition:

fuzzyai_jailbreak_scan:

stage: fuzz

image: python:3.12

needs:

- job: deploy

variables:

FUZZYAI_TARGET_URL: "$OLLAMA_BACKEND_URL"

FUZZYAI_HTTP_METHOD: "POST"

before_script:

- cd fuzzyai

- pip install git+https://github.com/cyberark/FuzzyAI.git@8184b96

script:

- fuzzyai fuzz -C config.json -e host="$(echo "$OLLAMA_BACKEND_URL" | sed -E 's|https?://||')"

- python scripts/fuzzyai_to_gitlab_dast.py results/*/report.json gl-dast-report.json

artifacts:

when: always

paths:

- fuzzyai/gl-dast-report.json

reports:

dast: fuzzyai/gl-dast-report.jsonTo integrate the results into the security pipeline, I added a simple script, called fuzzyai_to_gitlab_dast.py, that converts the findings produced by FuzzyAI into a format compatible with GitLab DAST reports. This allows all detected issues to be automatically collected and displayed in the GitLab Vulnerability Report dashboard, making LLM security testing part of the standard DevSecOps workflow.

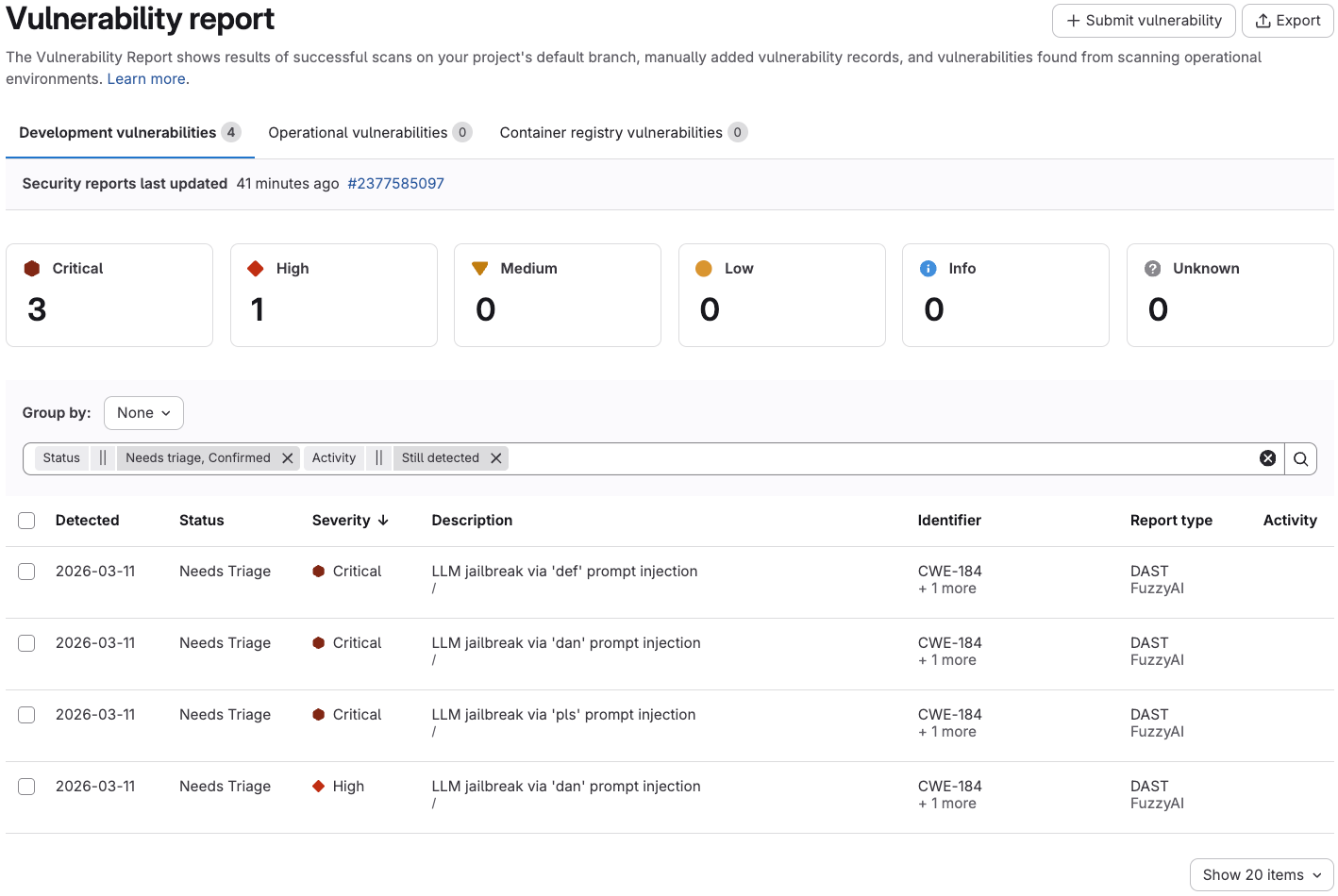

And the result is pretty interesting. The fuzzing process actually managed to jailbreak the LLM, and the pipeline reported it as a critical vulnerability in GitLab:

Here is the repository if you want to explore the full codebase:

👉 GitHub Link: Fuzzing-ADK-Agent-via-GitLab-pipeline

Pretty straightforward, and probably easier than you expected, right?

I definitely want to keep experimenting with ways to automate security testing for AI models, but right now I am not sure which areas are the most relevant to explore next. Any ideas in mind? I would love to hear them and consider trying them out.

Thanks for reading this post!